Why AI Automation Fails: The Mistakes Software Teams Make Too Early

Table of Contents

- Why AI Automation Fails So Often

- The Real Mistake Teams Make With AI

- Four Problems Early Automation Creates

- Signs a Workflow Is Not Ready for AI

- What to Fix Before You Automate

- Best Workflows to Automate First

- A Smarter AI Rollout Order

- Conclusion

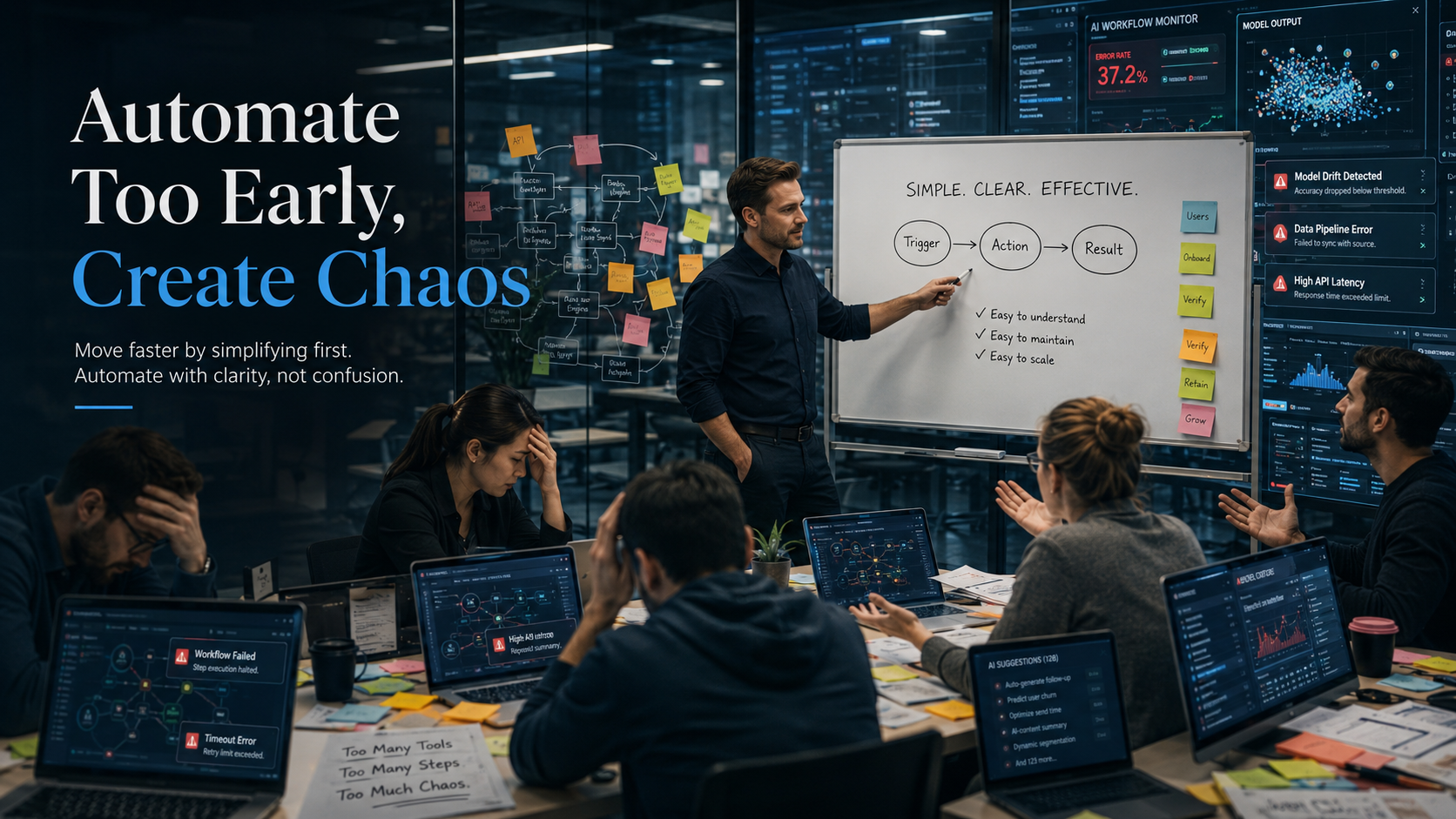

AI automation rarely fails because a team chose the wrong tool. It fails because teams try to automate workflows that are still unclear, inconsistent, or already broken. In most software teams the story is the same: AI was layered on top of a process that was never stable to begin with.

That can sound backward at a time when AI adoption is moving faster than most companies can govern it. Microsoft’s 2024 Work Trend Index found that 75% of knowledge workers already use AI at work, that usage had nearly doubled in six months, and that 46% of users had started using it less than six months earlier. The same research found that many leaders still worry their companies lack a clear vision and plan for AI implementation.

That gap is where confusion starts. Teams feel pressure to “do something with AI,” so they begin automating before they have agreed on how work should actually happen:

- They automate tasks before defining ownership.

- They add AI summaries before fixing documentation quality.

- They generate outputs faster before agreeing on what “good” looks like.

- They scale speed before they scale clarity.

Why AI Automation Fails So Often

Most teams think AI will clean up messy work. In practice, AI usually scales whatever already exists. If the process is weak, AI rarely fixes it. More often, it gives the weakness a cleaner interface and a faster output cycle.

Understanding why AI automation fails starts with recognizing this pattern:

- Vague documentation becomes vague AI summaries.

- Weak handoffs become faster weak handoffs.

- Unclear approval chains become faster approval confusion.

- Constant process changes become automated instability.

People say things like “the tool is smart,” “it already generates the draft,” or “we can automate this whole thing” — but those statements ignore the real question: what exactly is being automated?

If the answer is “a process that still depends on tribal knowledge, exceptions, or guesswork,” then the team is not automating a system. It is automating uncertainty. The same dynamic explains why communication plans fail in software teams: scattered information and undefined ownership cannot be fixed by adding speed.

McKinsey’s 2025 State of AI research points the same way: workflow redesign had the strongest relationship with whether organizations saw EBIT impact from generative AI, yet only 21% of organizations using gen AI said they had fundamentally redesigned at least some workflows. In other words, most organizations appear to be layering AI onto existing ways of working instead of rebuilding the workflow itself.

Four Problems Early Automation Creates

When teams automate too early, they usually create four problems at once.

First: automating exceptions instead of standards. The process looks repeatable on the surface, but under the hood it depends on special cases, unwritten judgment, and missing rules. The AI output looks complete. Then someone notices it missed context, another person edits it manually, and a third questions the source. The team still needs human cleanup, but now the cleanup is hidden later in the workflow.

Second: broken ownership. AI can generate a draft, but it cannot remove the need for accountability. When nobody is clearly responsible for validating, correcting, approving, or escalating the output, the team mistakes motion for progress.

Third: noise. Instead of reducing admin work, teams create parallel streams — AI meeting notes, AI status updates, AI sprint recaps, AI-generated action items, AI ticket summaries. Individually useful. Collectively, they create a trust problem: the team no longer knows which version matters or what is reliable enough to act on.

Fourth: confusing output with value. A team can produce more drafts, updates, summaries, and tickets without improving delivery speed, alignment, decision quality, stakeholder trust, or execution clarity. Fast confusion is still confusion.

Signs a Workflow Is Not Ready for AI

Before you automate a task, ask one question: could a new team member follow this process without guessing? If the honest answer is no, the workflow is not ready. These are the clearest warning signs:

- Two people describe the same process in different ways.

- Important context lives in Slack, meetings, or memory instead of one source of truth.

- The team changes the sequence every sprint.

- Nobody agrees on what a “done” output looks like.

- Errors are discovered late by a different team downstream.

- Approval steps are implied rather than explicit.

- The process depends heavily on one experienced person filling in the gaps.

- People want AI to replace a step they still cannot clearly explain themselves.
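
The warning signs above can be turned into a simple pre-automation check. The following is a minimal sketch, not a prescribed tool: the dictionary keys and the `readiness_report` function are hypothetical names, and treating any single warning sign as "not ready" is an assumption of this example rather than a rule from the research cited earlier.

```python
# Hypothetical labels for the warning signs listed above.
WARNING_SIGNS = {
    "conflicting_descriptions": "Two people describe the same process in different ways",
    "scattered_context": "Important context lives in Slack, meetings, or memory",
    "unstable_sequence": "The team changes the sequence every sprint",
    "no_done_definition": "Nobody agrees on what a done output looks like",
    "late_error_discovery": "Errors are discovered late by a downstream team",
    "implicit_approvals": "Approval steps are implied rather than explicit",
    "single_expert_dependency": "One experienced person fills in the gaps",
    "unexplainable_step": "The step to be replaced cannot be clearly explained",
}


def readiness_report(observed: set[str]) -> dict:
    """Return whether a workflow looks ready and which warning signs were observed."""
    unknown = observed - set(WARNING_SIGNS)
    if unknown:
        raise ValueError(f"Unknown warning signs: {sorted(unknown)}")
    present = [text for key, text in WARNING_SIGNS.items() if key in observed]
    # Assumption of this sketch: any single warning sign means "not ready yet".
    return {"ready": not present, "warning_signs": present}


report = readiness_report({"implicit_approvals", "scattered_context"})
print("Ready to automate:", report["ready"])
for sign in report["warning_signs"]:
    print("-", sign)
```

Running the check with the team in the room, rather than by one person alone, tends to surface the first sign on the list directly: people will disagree about which boxes apply.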

What to Fix Before You Automate

The correct order is: stabilize, standardize, then automate.

Start with the exact job to be done. Define the task in operational language — not “improve sprint planning” but “turn backlog changes into a weekly stakeholder summary using a fixed format with owner names, blockers, next actions, and delivery risk, pulled from agreed sources only.”

Then fix the input layer. AI cannot create a clean output from scattered, incomplete, or contradictory inputs. The best way to document recurring team knowledge with AI covers exactly how to build that clean input layer before automation begins.

Next, fix ownership. Every automation needs a human role attached — someone clearly accountable for reviewing the output, approving it, correcting errors, spotting exceptions, and deciding when the automation should not be used.

Finally, define the quality bar. What makes this output usable? What requires a rewrite? What must always be checked by a human? If those answers are still fuzzy, the workflow is still immature.
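
One way to make these four fixes concrete is to write the automation down as a small spec and refuse to proceed while any part is blank. This is only an illustrative sketch: `AutomationSpec`, its field names, and the `"backlog"` source are hypothetical, and the example values are taken from the stakeholder-summary task described above.

```python
from dataclasses import dataclass, field


@dataclass
class AutomationSpec:
    """Hypothetical record of what must be defined before automating a task."""
    job: str                                                 # the exact job to be done
    output_fields: list[str] = field(default_factory=list)   # fixed output format
    sources: list[str] = field(default_factory=list)         # agreed input sources
    owner: str = ""                                          # accountable human role
    human_checks: list[str] = field(default_factory=list)    # quality bar items

    def gaps(self) -> list[str]:
        """List which parts of the spec are still undefined."""
        checks = [
            (self.job.strip(), "job is not defined"),
            (self.output_fields, "output format is not fixed"),
            (self.sources, "no agreed input sources"),
            (self.owner.strip(), "no accountable human owner"),
            (self.human_checks, "quality bar: no human checks defined"),
        ]
        return [message for value, message in checks if not value]


spec = AutomationSpec(
    job="Turn backlog changes into a weekly stakeholder summary",
    output_fields=["owner names", "blockers", "next actions", "delivery risk"],
    sources=["backlog"],  # hypothetical source name
)
print(spec.gaps())
```

In this example the job and format are defined, but the spec still reports a missing owner and a missing quality bar, which is the situation the section warns against.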

Best Workflows to Automate First

The best first automations are boring, repetitive, and low-risk. Good early use cases create confidence without creating hidden damage. This is also where the failure pattern becomes most visible: teams skip the boring, safe automations and go straight for complex, high-stakes ones before their processes are ready.

| Good First Automations | Bad First Automations |
|---|---|
| Meeting notes cleanup | Full strategic decision-making |
| Weekly update drafts | Auto-writing stakeholder messaging without review |
| Ticket tagging and categorization | Letting AI prioritize the roadmap alone |
| Internal FAQ responses | Making end-to-end customer promises without review |
| Handoff preparation and recap generation | Replacing product judgment on scope |

For the specific AI tools that handle these tasks well inside a PM workflow, see How to Automate Project Status Updates: 3 AI Tools That Do the Heavy Lifting.

A Smarter AI Rollout Order

- Map the workflow in plain language.

- Remove unnecessary steps.

- Define one source of truth.

- Standardize the inputs and outputs.

- Assign one clear human owner.

- Add AI to one narrow repetitive step.

- Review quality for two weeks.

- Measure whether confusion actually decreased.

- Expand only after the workflow proves stable.
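
The last three steps (review, measure, expand) can be backed by a very small log of reviewed outputs. The sketch below is one possible way to do that, under stated assumptions: the `Review` record, the `should_expand` function, the 20% rewrite threshold, and the minimum of 10 reviews are all hypothetical choices for illustration. Only the two-week review period comes from the list above. Rewrite rate is used here as a rough proxy for confusion; a team may prefer a different signal.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Review:
    """One human review of an AI-generated output (hypothetical record)."""
    day: date
    needed_rewrite: bool


def should_expand(
    reviews: list[Review],
    start: date,
    period_days: int = 14,          # the two-week review period
    max_rewrite_rate: float = 0.2,  # assumed threshold, tune per team
    min_reviews: int = 10,          # assumed minimum sample size
) -> bool:
    """Expand only if enough outputs were reviewed and few needed a rewrite."""
    end = start + timedelta(days=period_days)
    window = [r for r in reviews if start <= r.day < end]
    if len(window) < min_reviews:
        return False  # not enough evidence yet
    rewrite_rate = sum(r.needed_rewrite for r in window) / len(window)
    return rewrite_rate <= max_rewrite_rate


start = date(2025, 1, 6)
# Ten reviews on consecutive days; only the first output needed a rewrite.
reviews = [Review(start + timedelta(days=i), needed_rewrite=(i == 0)) for i in range(10)]
print(should_expand(reviews, start))
```

Returning `False` when there are too few reviews is deliberate: an automation nobody has checked has not proved itself stable.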

Conclusion

Microsoft’s research shows employees are adopting AI quickly, often faster than organizations are formally structuring its use. McKinsey’s research shows that redesigning workflows matters more for value creation than simply adding gen AI on top of existing processes.

The smartest teams do not ask “what can we automate today?” They ask: what part of this workflow is stable enough to automate? Where do we have repeated manual effort with clear inputs? Where will AI reduce friction without hiding accountability? How will we know the automation made the system better, not just faster?

That is the real answer to why AI automation fails: the problem is rarely a bad tool choice and far more often premature automation. Once your workflows are ready, the next step is choosing the right stack. See AI Tools for Project Managers: Build a Lean Stack That Actually Works for a structured breakdown of what belongs in a lean, high-signal PM stack.